Funding body: Business Finland

Principal Investigator: Matti Mäntymäki

Overview

The AIGA project explores how to execute responsible artificial intelligence (AI) in practice. The project is funded by Business Finland and runs for two years (2020–2022). The total budget of the AIGA program is approximately 4.25 M€. The AIGA consortium partners are University of Turku, University of Helsinki, Aivan Innovations, DAIN Studios, Siili Solutions, Solita, Talent Base, and the Finnish Tax Administration. The AIGA research activities at the University of Turku are cross-disciplinary: Turku School of Economics, the Department of Future Technologies, and the Faculty of Law jointly contribute to the project.

Background

Algorithm-based and algorithm-assisted decision-making is becoming increasingly pervasive, not only across industry sectors but also in public and governmental services. The widespread use of algorithms, and particularly incidents of their misuse[1], has increased public awareness of the risks and challenges related to algorithmic decision-making.

To nurture citizens’ trust in algorithmic decision-making, the European Commission has published a white paper delineating guidelines for the responsible use of artificial intelligence (AI)[2]. While algorithmic systems need to fulfill existing regulatory requirements such as the General Data Protection Regulation (GDPR), there is currently also an EU-level discussion on the need for specific regulation of algorithmic systems, particularly AI.

Against this backdrop, it is hardly surprising that concepts such as explainable AI (xAI) and AI transparency have received considerable attention in both the professional and the academic communities. While there is a clear need for increased explainability and transparency of algorithmic systems, it is less clear how to implement these practices in organizations that rely on algorithmic systems in their business operations. In particular, what has thus far been lacking is a comprehensive framework for the governance of algorithmic systems. A key element of governance is the auditability of algorithmic systems, i.e. establishing a sufficient record of the steps taken by the algorithm and communicating these steps in a format and language that can be understood by the humans involved in the process.

Considering the increasing public awareness of the risks associated with decisions taken by algorithms and the need to meet existing and potential future regulatory requirements, it is evident that a significant European market for responsible AI will emerge in the near future. As a result, there is momentum and a rapidly emerging market need for commercializing AI explainability and transparency. Finland is in a position to capitalize on this momentum by taking steps towards establishing a business ecosystem focusing on AI governance and auditing.

AIGA Mission and Purpose

The purpose and mission of the AIGA program is to enable the execution of responsible AI in practice by laying out best-in-class governance mechanisms and auditing principles for algorithmic decision-making and by building a commercialization roadmap for AI governance and auditing.

The Artificial Intelligence Governance and Auditing (AIGA) project focuses on the implementation of responsible and transparent algorithmic decision-making, particularly decision-making based on artificial intelligence (AI). The purpose of the project is two-fold:

- To explore and co-create governance practices and mechanisms that enable and support the execution of responsible and transparent AI in organizations.

- To investigate how these governance practices and mechanisms can be productized and/or servitized into commercial solutions and what kind of business ecosystem(s) can emerge around AI governance and auditing.

To recap, there is an increasing societal need for mechanisms and practices that help nurture citizens’ and consumers’ trust in the decisions made by algorithms, and for solutions that help achieve this objective. The AIGA project has been designed and positioned to address this need by creating new knowledge on the responsibility and transparency of algorithmic decision-making, particularly AI.

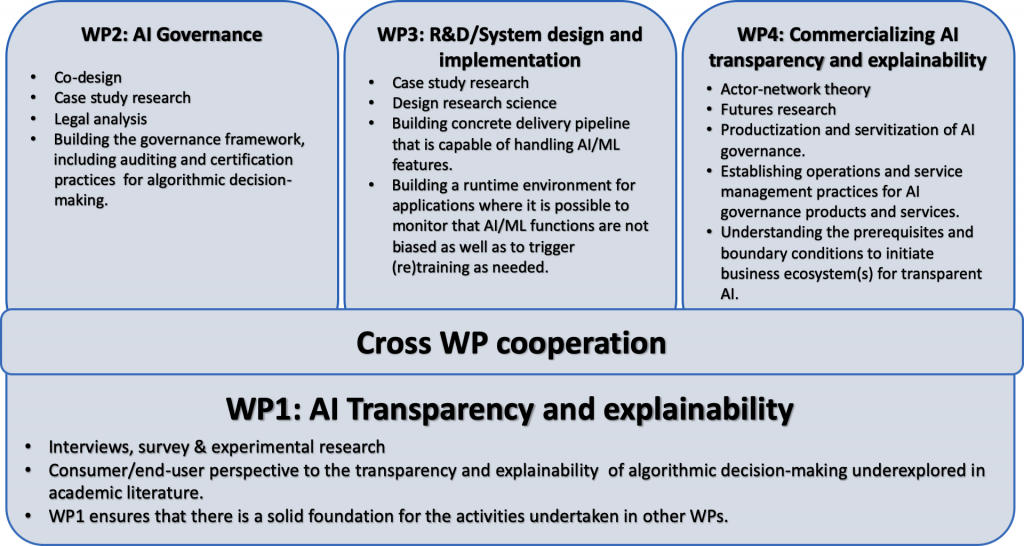

Work packages

The research activities within the AIGA project take place in four work packages. Each work package includes both industry and university partners.